When one American man started using Ashley Madison, the online dating website marketed to aspiring adulterers, he hoped to be connected to women looking for a little fun near his home in Maryland.

Instead, he was messaged by robots designed to keep him spending money on the site, according to a lawsuit he has filed against the company.

“It does feel good to be contacted and to have people interested in you,” he said through his attorney. “Then, it makes you angry when you find out they weren’t even real people to begin with and the only purpose these profiles served was to separate me from my money.”

While women can use Ashley Madison for free, men are required to buy “tokens” allowing them to message female users back.

“I spent $250 on Ashley Madison tokens,” he added.

According to a recent report by Korea’s Institute for Information & Communications Technology Promotion (IITP), Ashley Madison artificially created approximately 70,000 female accounts run by robots with artificial intelligence (AI) to make up for how few women actually used the site.

At the same time, Ashley Madison was advertising its abundance of female users.

“Ashley Madison did lie to its customers, but if you think about it another way, it means that current AI technology has improved remarkably,” said Park Jong-hun, an IITP member who contributed to the report. “Nobody can commit actual adultery with a robot, but male users will continuously pay more because they are desperate to have affairs, and are fooled into thinking they can with one of these bots.”

Futurists have long been interested in how robots will be able to mimic human behavior, and recent developments have been remarkable. Apps like Korea’s SimSimi, China’s Xiaoice and Japan’s Rinna allow users to have basic conversations with robots about sports and entertainment, and the bots have a basic sense of humor and the ability to catch certain conversational nuances.

Microsoft, Google and Apple have their own AI technologies in their operating systems, and soon could offer more advanced voice recognition or speaking functions.

But one new development is dating programs that use AI technologies, which are gaining traction in countries like the United States.

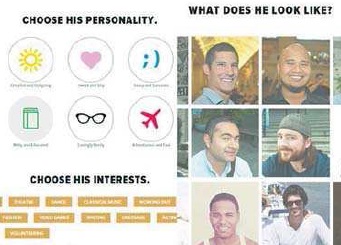

If a user pays a certain amount, he or she can choose a partner based on its virtual appearance, personality and career. Once they are “connected,” they can have conversations like a normal, human couple.

Most customers are looking for someone to show off to friends and family members, or for a way to avoid awkwardness when a concerned relative asks them if they’re seeing anyone.

Olivia Goldhill, a reporter at the Telegraph, tried using one online dating app called Invisible Boyfriend, and compared its responses to those of her actual boyfriend.

Invisible Boyfriend allows you to create the profile of your ideal partner, including his personality and interests.

After Goldhill texted, “My back hurts,” her virtual boyfriend said, “I will give it a nice massage and take that pain away!” He even added, “My day is better now that I’m talking to you.”

Her real boyfriend’s reply? “I had a terrible headache yesterday.”

“IB [Invisible Boyfriend] may not know me personally, but he is being paid for his loving messages – and RB [real boyfriend] is a notoriously bad text conversationalist,” Goldhill wrote in her article.

Machine learning technology, a type of AI, is what allows the Invisible Boyfriend or Ashley Madison bots to keep users coming back. It can teach itself to grow and change when exposed to new data, becoming smarter and smarter in the process.

Korean companies are also getting in on the action. Local start-up Scatterlab recently released an emotion-analyzing app called Textat. Users input texts sent by loved ones into the app, which Textat analyzes using its conversation database of more than two billion texts sent by 600,000 people. Then, the app offers users personalized advice about how to respond.

Other companies are getting decidedly more physical. TrueCompanion, a company based in the United States, is expected to release the world’s first sex robot soon. The company claims the robot will be able to express itself through AI technology.

RealDoll, the best-known sex doll manufacturer, is also planning on getting involved in the AI market.

But while texting with a robot seems harmless enough, some experts say introducing sex creates a host of issues, ethical and otherwise.

“[Sex with robots is] something we need to be worried about,” said Kathleen Richardson, a senior research fellow in the ethics of robotics at De Montfort University in England. “You can’t have sex with a machine like you do with a human being […] we’re losing our sense of humanity.”

Nell Watson, a futurist at think-tank Singularity University in the United States, is more ambivalent.

“One of the causes of seeking prostitution is deep-seated loneliness,” she said, “and we can look at whether machines can be a solution for loneliness.”

“Although an AI robot can act like a human, it would not be able to understand complex concepts such as love,” Prof. Lee Kyung-jeon of Kyung Hee University said.

“We also have to worry about securing personal information just in case it gets hacked,” he added.

BY SON HAE-YONG [kim.youngnam@joongang.co.kr]